Performance Engineering of the Confined Unconfined Aquifer System Model (CUAS)

Introduction

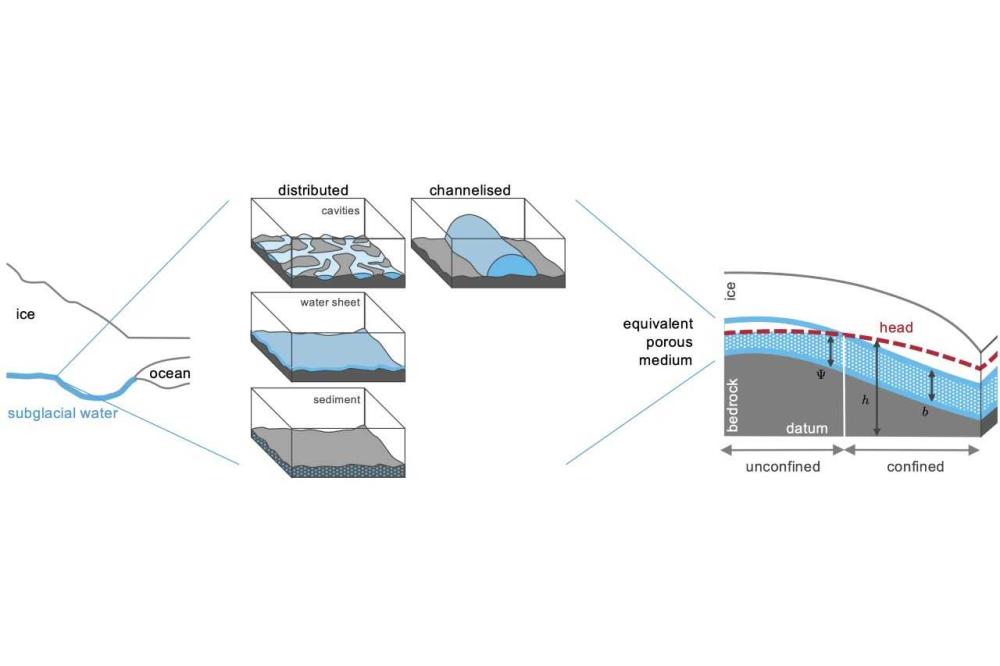

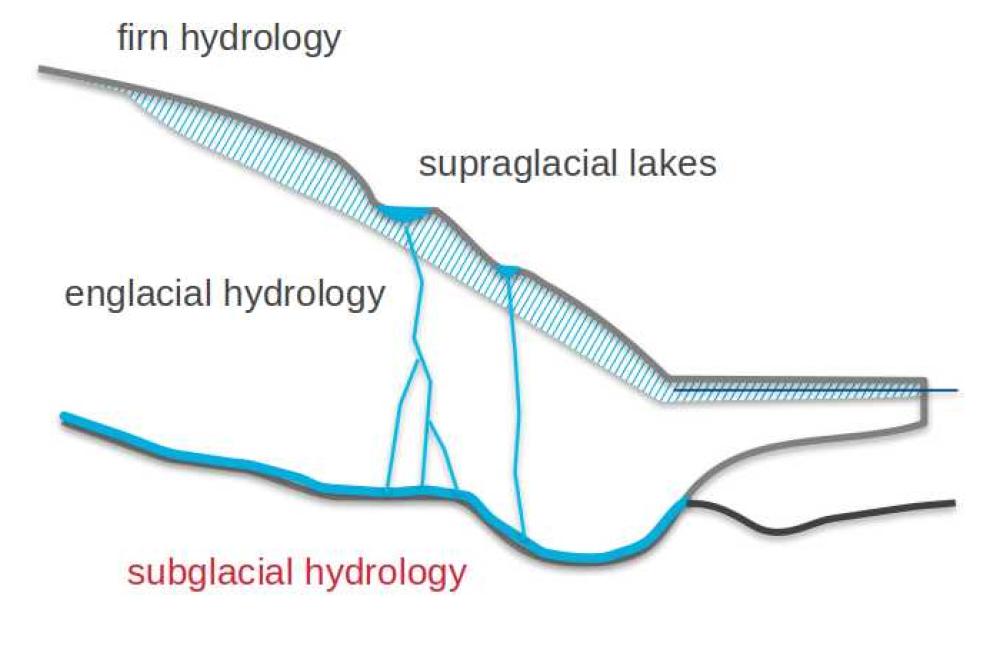

Simulating ice sheets is an important part of earth system modeling. Ice-sheet modeling covers both the ice sheet itself and the subglacial hydrology. The latter is important because subglacial water affects the movement of the ice sheet and contributes to global sea-level rise. Simulating water flow over areas as large as the whole of Greenland is not trivial. The confined and unconfined aquifer system model (CUAS) is an efficient approximation of the hydrology equations. The model was originally presented as a serial demo version written in Python, which does not run fast enough for realistic scientific setups. In this project we aimed to implement a fast and scalable version of the existing model using C++ and MPI. Our software is called CUAS-MPI. Finally, we implemented an experimental runtime coupling of CUAS-MPI with the Ice-sheet and Sea-level System Model (ISSM) using the preCICE library.

Methods

We implemented CUAS-MPI from scratch. To ensure the numerical correctness of the process-parallel version, we derived reference data from the previous serial implementation and use it in test cases that run in our continuous integration pipeline on the Lichtenberg HPC system. We use the Portable, Extensible Toolkit for Scientific Computation (PETSc), written in C, to benefit from the very good scaling of its existing algorithms and from future improvements of the library. To ensure correct and clear usage of the library features, we implemented our own object-oriented interface for the PETSc data structures. Data is read and written in NetCDF format using standardized libraries. Our timings are measured by code instrumentation using Score-P, with individual filters to keep the measurement overhead low.
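The comparison of a parallel result against reference data from the serial implementation can be sketched as follows. This is a minimal illustration, not the actual CUAS-MPI test code: the function names `maxRelativeDifference` and `matchesReference`, the use of plain `std::vector<double>` fields, and the relative-tolerance criterion are all assumptions made for this example.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <stdexcept>
#include <vector>

// Hypothetical helper (not part of CUAS-MPI): largest relative deviation
// between a field computed by the parallel code and the serial reference.
double maxRelativeDifference(const std::vector<double>& computed,
                             const std::vector<double>& reference) {
  if (computed.size() != reference.size()) {
    throw std::invalid_argument("fields must have the same number of grid points");
  }
  double worst = 0.0;
  for (std::size_t i = 0; i < computed.size(); ++i) {
    // Avoid division by zero where the reference value is zero or tiny.
    const double scale = std::max(std::abs(reference[i]), 1e-12);
    worst = std::max(worst, std::abs(computed[i] - reference[i]) / scale);
  }
  return worst;
}

// Hypothetical helper: true if every grid point agrees with the reference
// within the given relative tolerance.
bool matchesReference(const std::vector<double>& computed,
                      const std::vector<double>& reference,
                      double relativeTolerance) {
  assert(relativeTolerance >= 0.0);
  return maxRelativeDifference(computed, reference) <= relativeTolerance;
}
```

In a test case, the reference field would be loaded from a file produced by the serial version, and `matchesReference` would be called on the gathered parallel result. A relative tolerance rather than exact equality is assumed here because floating-point results of a parallel solver generally differ slightly from a serial run.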

Results

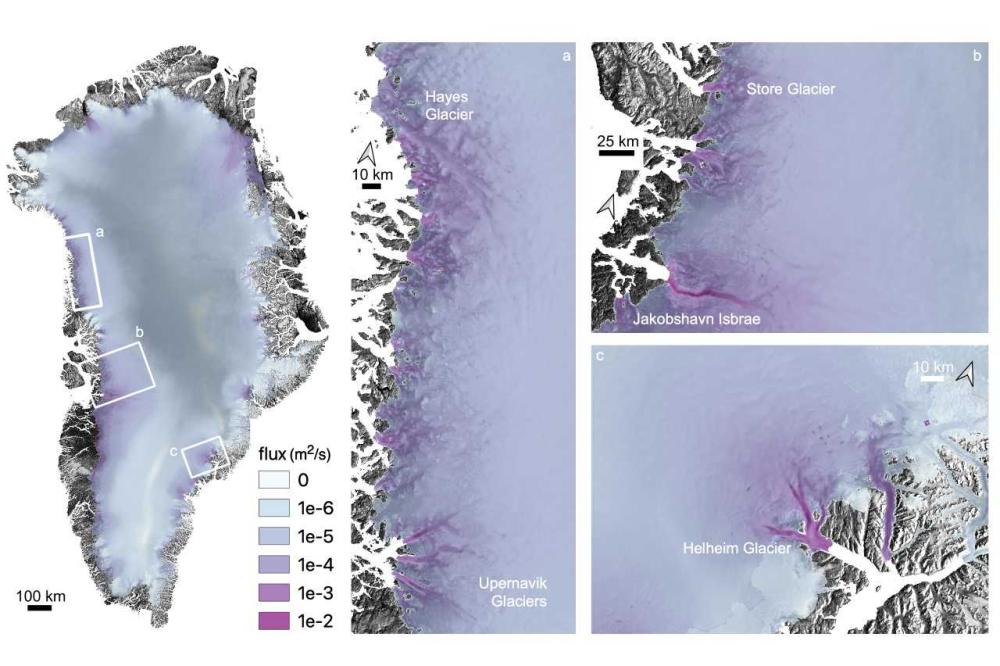

The object-oriented software design of CUAS-MPI and the test-driven development workflow proved to be an efficient way of implementing a parallel version of CUAS. The scaling of CUAS-MPI makes it possible to compute the hydrology of the Greenland ice sheet even at high resolutions. We have already run simulations that show promising physical results, which domain scientists will elaborate further.

Discussion

The newly developed implementation of the confined unconfined aquifer system model scales sufficiently well up to at least 3840 MPI processes. This scaling allows CUAS-MPI to use a significant share of the resources of an HPC system such as Lichtenberg 2. The highest throughput, measured as the number of simulated years computed per day, is comparable to the throughput of the related framework Ice-sheet and Sea-level System Model. This makes CUAS-MPI interesting for a wide community.
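The throughput metric mentioned above, together with a common strong-scaling efficiency measure, can be written down in a few lines. This is a sketch under stated assumptions: the function names are hypothetical, and the efficiency definition (baseline cost divided by the cost of the larger run) is a standard convention, not necessarily the one used in the CUAS-MPI evaluation.

```cpp
#include <cassert>

// Throughput in simulated years per day of wall-clock time.
// Hypothetical helper, named for this example only.
double simulatedYearsPerDay(double simulatedYears, double wallClockSeconds) {
  assert(wallClockSeconds > 0.0);
  constexpr double secondsPerDay = 86400.0;
  return simulatedYears * secondsPerDay / wallClockSeconds;
}

// Strong-scaling efficiency relative to a baseline run of the same problem:
// 1.0 means ideal scaling, smaller values mean lost efficiency.
double parallelEfficiency(int baseProcesses, double baseSeconds,
                          int processes, double seconds) {
  assert(baseProcesses > 0 && processes > 0);
  assert(baseSeconds > 0.0 && seconds > 0.0);
  return (baseProcesses * baseSeconds) / (processes * seconds);
}
```

For example, a hypothetical run that simulates 10 years in one hour of wall-clock time yields a throughput of 240 simulated years per day.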

Furthermore, coupling CUAS-MPI with simulations such as ISSM is possible without causing waiting time. The current prototype of the coupling shows the large modeling potential of runtime coupling. It is a promising approach that has to be investigated further.

In the next steps, we will extend the framework with minor features and create improved setups for Greenland and Antarctica. A major improvement will be the feature-complete implementation of a runtime coupling interface between CUAS-MPI and ISSM.